I started drawing on a computer in the early 1990s with AutoCAD R10.

Back then it was black screens, command lines, coordinates instead of cursors. If you wanted to express an idea visually, you did not drag shapes around. You constructed it, line by line, with the same kind of concentration you would use writing a careful spec.

That early experience baked in a bias I still carry.

A diagram is not decoration. It is a claim about how reality is structured.

And the first claim is almost always wrong.

Since then, I’ve used just about every diagramming flavor I could get my hands on: house plans, network diagrams, process flows, mind maps, org charts, UML, crow’s-foot schemas, and enough hours in Visio to qualify for a minor emotional settlement.

Some tools were elegant. Many were painful. Nearly all of them charged the same tax: the cost of translating meaning into visuals.

For most of that time, the friction was not in the thinking. It was in the translation.

The hardest part was rarely understanding the idea. It was making the picture behave.

You had to pick the tool. Pick the shapes. Route the lines. Fight the canvas. And hope the final result looked enough like the thing in your head that someone else could understand it without a guided tour.

That worked. Sometimes. But it was expensive — not just in money, but in attention, effort, and delay. It was a Manual Labor Tax on every idea that deserved a visual but got a paragraph instead.

That tax is why people default to text, even when text is a lousy medium for structure. That is how we ended up with the modern classics: the 47-bullet Slack message, the “quick summary” that reads like a novella, and the doc everyone bookmarks and nobody finishes.

We did not lack expertise. We lacked compression.

If you’ve ever opened Visio with a fully formed thought in your head and still wondered, How in the hell am I supposed to draw this thing? — you already know the tax.

From Drawing to Modeling

About eight months ago, ChatGPT coughed up a visual I didn’t ask for. It wasn’t an image. It wasn’t a screenshot. It was code. It was a text-based diagram. A flowchart. A skeleton of boxes and arrows that looked like it had been drawn in Visio but rendered in monospace.

I asked the only reasonable question: What the hell is that?

It replied: A Mermaid diagram.

I asked Copilot to generate one too. Then I discovered Microsoft Loop could render Mermaid natively. Suddenly I wasn’t “drawing” in the old sense anymore. I was describing structure in words and letting the rendering happen downstream.

That changed the economics. Mermaid plus LLMs turned diagramming from click-drag labor into text-native iteration. I could throw spaghetti against the wall at high velocity and minimal drag, then tighten the structure after the fact.

ROY: Return on Your Words

Once the rendering cost collapsed, the real question became unavoidable: What did the words actually buy?

The ROY Formula

Clarity Delivered ÷ Words Required to Deliver It

Return on Your Words = understanding extracted ÷ words invested

A 47-bullet Slack message? Poor ROY. A “quick summary” that still requires a meeting to decode? Negative ROY. A diagram generated from 50 well-chosen words that a team grasps in five seconds and uses correctly? Potentially extraordinary ROY.

That is why I don’t believe a picture is automatically worth 1,000 words. Some pictures are decorative wallpaper with a superiority complex. Some diagrams are just confusion with borders and connector lines.

The real test is simpler: Did the visual remove enough confusion to justify the effort it took to make it?

A picture is only worth the words it saves, the ambiguity it kills, and the momentum it creates.

The first diagram usually fails that test. It wants to look finished. It wants to reassure you that the path is known, the thinking is done, and the complexity has been tamed. Real thinking is uglier than that.

Real thinking loops. It forks. It backtracks. It trims its own nonsense. It discovers, usually late, that the clever version and the useful version are not the same thing.

Attention Is Finite (and Text Hides Flow)

People do not fail to read because they are lazy. They fail to read because their capacity to absorb meaning is finite and the workday is a relentless attention shredder. Every meeting, every notification, every summary that is really just a longer version of the thing it was supposed to replace — they all draw from the same account. By the time someone reaches your explanation, they may already be overdrawn.

Text handles nuance and precision well. What it handles poorly is flow. A paragraph can describe a process sequentially, but the reader has to do all the structural work themselves — building the mental model one sentence at a time, inferring the branches, guessing where the loops are, deciding which steps are parallel and which are dependent. None of that labor shows up in the word count. It shows up in the meeting you scheduled for next Tuesday to clear up the confusion from the doc you sent on Monday.

The most expensive part of a text explanation is not writing it. It is the reconstruction tax every reader pays silently on the other end.

You can watch this happen in real time. Someone nods through the walkthrough. They ask a clarifying question that reveals they assembled a completely different machine in their head. Nobody is stupid. The medium simply did not carry the structure. The words moved. The shape of the thing did not.

This is where diagrams earn their keep — not by being decorative, not by making slides look serious, but by doing what text cannot: externalizing the shape so no one has to reconstruct it from scratch. A good diagram hands the reader a pre-built mental model. The cognitive cost still exists, but it is paid once, in the making, rather than thousands of times, in the reading.

The catch is that most diagrams do not do this. Most diagrams are not honest.

Most diagrams are optimism with arrows. They erase the messy edges because the author wants to look decisive, or because the tool quietly punishes complexity.

A specific kind of pressure pushes diagrams toward tidiness, and it has nothing to do with the underlying reality. Some of it comes from the tools (Visio, Lucidchart, slide decks), which reward clean alignment and punish irregular shapes. Some of it comes from the audience: nobody wants to present a diagram that looks confused, even when confusion is the accurate state of the work. And some of it comes from the author’s own desire to perform certainty before they have earned it.

So the feedback loops get removed. The exception paths disappear. The revision cycles that actually happened get replaced with a single clean arrow. The diagram stops being a model of how the work moved and becomes a model of how the work should have moved, in a world where thinking is linear, no one changes their mind mid-project, and the first answer was always the right one.

That is why the first diagram is usually a liar.

Not maliciously. Prematurely.

It shows what we hope is true before we have done enough work to see what is actually there. And because it looks finished — clean shapes, labeled arrows, consistent formatting — it is easy to mistake the artifact for the understanding. The ROY on a lying diagram is deeply negative. It creates false confidence, forecloses questions that should still be open, and charges the organization the reconstruction tax anyway — just later, at higher cost, once reality disagrees with the diagram.

What a Mermaid Diagram Actually Is

Mermaid is not AI.

That sentence needs to land before anything else in this article makes sense, because if you carry the wrong assumption into the council comparison, the entire experiment looks like something it isn’t.

A Mermaid diagram is text. Structured syntax. A specific grammar that a JavaScript library reads and renders into a visual diagram inside a browser. When I say I put AI platforms through the same diagramming exercise, I’m not talking about image generation. I’m not talking about a model painting a flowchart. I’m talking about plain text that follows rules, and the diagram that appears on screen is what that text decided to be.

The closest analogy is an SVG file. An SVG isn’t a photograph. It isn’t a rendered image. It’s source code: coordinates, shapes, fills, and stroke instructions that a browser interprets and displays as something visual.

Mermaid works the same way. And here’s the test that settles it:

If a stubborn enough person can sit down with a plain text editor and type the structure by hand, the structure itself is not AI. It is text.

A Mermaid file passes that test. Here’s a simple one in its raw form:

flowchart LR

A[Request Submitted] --> B{Approved?}

B -- Yes --> C[Schedule Deployment]

B -- No --> D[Return to Requester]

A human typed that. It renders into a clean, publication-ready flowchart. No model involved. No API call. No generation. The same way a human can write an HTML file in a text editor and open it in a browser, a human can write a Mermaid file and render it in the Mermaid Live Editor.

That source-first quality is the part people miss. A Mermaid diagram is inspectable. It can live in source control. It can be diffed. It can be versioned. It can be reviewed the way you review any other structured artifact. If the diagram changes, you can often see exactly where the meaning changed. A label was tightened. A branch was added. A decision point was removed. That is a very different thing from treating a diagram like a frozen screenshot that nobody wants to touch.
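To make the "it can be diffed" point concrete, here is a minimal sketch using Python's standard `difflib`. The two diagram revisions and the file names `v1.mmd` and `v2.mmd` are made up for illustration; the second revision adds a feedback edge to the approval example.

```python
import difflib

# Two revisions of the same illustrative diagram source. The second adds
# a feedback loop from the rejection branch back to the submission step.
V1 = """flowchart LR
A[Request Submitted] --> B{Approved?}
B -- Yes --> C[Schedule Deployment]
B -- No --> D[Return to Requester]
"""

V2 = """flowchart LR
A[Request Submitted] --> B{Approved?}
B -- Yes --> C[Schedule Deployment]
B -- No --> D[Return to Requester]
D -- Revised --> A
"""


def diagram_diff(old: str, new: str) -> list[str]:
    """Return only the added and removed lines between two Mermaid sources."""
    diff = list(
        difflib.unified_diff(
            old.splitlines(),
            new.splitlines(),
            fromfile="v1.mmd",  # hypothetical file names
            tofile="v2.mmd",
            lineterm="",
        )
    )
    # The first two lines are the file headers; hunk markers start with "@@".
    return [line for line in diff[2:] if line.startswith(("+", "-"))]


if __name__ == "__main__":
    for line in diagram_diff(V1, V2):
        print(line)
```

The change in meaning (a new loop) shows up as a single added line, which is the kind of review a screenshot cannot support.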

It also means the format itself stays neutral. Human authors can write it. AI systems can write it. Teams can pass it back and forth between both. The authorship changes. The syntax does not.

The format also extends beyond the core library. BPMN for Mermaid builds on the same text-first syntax to add a native business process diagram type: same source-control-friendly model, same LLM-generatable format, now with BPMN 2.0 semantics.

Mermaid the Syntax vs Mermaid.ai the Platform

Mermaid the syntax is a language.

Mermaid.ai the platform is one AI-assisted on-ramp to that language.

Those are not the same thing. Mermaid.ai is a generative AI product purpose-built to help you produce Mermaid code faster. ChatGPT, GitHub Copilot, Microsoft Copilot, and a growing list of extensions can all generate Mermaid syntax on request. None of them invented the format. They accelerate authorship of it.

The Council of AIs comparison wasn’t testing which AI could generate the prettiest picture. It was testing which AI produced the most accurate, most maintainable, most structurally sound Mermaid code from an evolving brief. The diagram is the visible output. The code is the work product. The AI is the author under evaluation.

A Mermaid diagram is not AI. It is text. The same way an HTML file is not AI, a spreadsheet is not AI, and a list of tasks in Notepad is not AI — unless a machine wrote it. And even then, the format itself is neutral.

That framing matters, because it changes the standard. The winning system is not the one that makes the flashiest picture fastest. It is the one that best translates thought into durable structure.

The One That Should Have Won

Mermaid.ai was the obvious ringer.

Its entire reason for existing is to produce exactly what I was asking every other council member to produce. If any platform should have dominated a Mermaid diagram shootout, it’s the one with “Mermaid” in the name. It was the biggest failure of the entire test. Not because it can’t draw. Because it couldn’t wait.

The failure has a name: premature rendering.

The moment Mermaid.ai detected diagram intent, it executed. No hold state. No absorption phase. I was still building context across multiple prompt turns: narrative first, system logic second, relationship mapping third. By the time I’d finished, Mermaid.ai had already produced three diagrams from the first two sentences. They weren’t wrong. They were premature. Built on an incomplete read of a brief that wasn’t finished yet.

That distinction matters. A wrong diagram can be corrected. A premature diagram is more dangerous because it often looks plausible. It has enough surface coherence to feel done, even though the underlying read was still shallow. That is exactly how the first diagram lies. Not by hallucinating nonsense, but by freezing partial understanding into something that looks authoritative before it has earned the right to.

Speed is not the same thing as synthesis.

Every other council member — Claude, ChatGPT, Copilot, Gemini, Notion, Perplexity, and Replit — supports deliberate context-building across multiple turns. You can withhold the draw instruction until the model has enough to work with. That loop is the core workflow for complex diagramming. Mermaid.ai skips it.

And that is not a small workflow preference. It is the whole ballgame. In simple drafting, eagerness feels helpful. In architecture, process design, and recursive ideation, eagerness can become distortion. The system starts optimizing for visible output before it has done the quieter work of understanding what is actually being asked.

My recommendation to the Mermaid team, offered as a product observation rather than a complaint: build a Think mode. A context-intake phase where users can feed narrative, files, system logic, and evolving intent across multiple turns without triggering generation. Then flip the switch.

Hold. Hold. Hold. Go.

That sequence is not hesitation. It is discipline. It is the difference between drafting a shape and understanding a system.
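As a rough illustration of the hold-then-go sequence, here is a minimal Python sketch. It is not how Mermaid.ai or any other platform works; `ContextIntake`, `hold`, `go`, and `stub_generator` are hypothetical names, and the generator is a stand-in for a real model call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ContextIntake:
    """Collects brief fragments and generates nothing until released."""

    generate: Callable[[str], str]  # hypothetical generator callable
    fragments: list[str] = field(default_factory=list)

    def hold(self, fragment: str) -> None:
        """Absorb one turn of context without triggering generation."""
        self.fragments.append(fragment)

    def go(self) -> str:
        """Flip the switch: generate once, from the full accumulated brief."""
        return self.generate("\n\n".join(self.fragments))


def stub_generator(brief: str) -> str:
    # Stand-in for a model call; it only reports how much context it saw.
    # "%%" starts a comment line in Mermaid source.
    return f"flowchart LR\n%% built from {len(brief.split())} words of context"


intake = ContextIntake(generate=stub_generator)
intake.hold("Narrative: how a LinkedIn post idea evolves.")
intake.hold("System logic: drafts loop back through critique.")
intake.hold("Relationships: author, council, audience.")
diagram_source = intake.go()  # the only point where generation happens
```

The design choice is that generation is an explicit call rather than a side effect of detecting diagram intent, so three turns of context produce one diagram instead of three premature ones.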

The irony: the Council of AIs was assembled specifically to generate Mermaid diagrams, and the platform purpose-built to generate them is not a member. It renders before the idea is ready. That is the result that has stayed with me longest.

The slide deck is now live, and that changes the status of the argument. Up to this point, this article has been doing the work in prose. v0.3 is the moment the experiment leaves prose-only mode and becomes a visible field for comparison, judgment, and public scoring. The Council produced the diagrams. The deck now puts them side by side. What had previously been a claim about diagramming can now be inspected, compared, and judged in public. That shift matters because the whole ROY argument has always been about whether a visual earns the words that produced it. If a diagram cannot create enough clarity to justify the explanatory cost, it does not matter how polished it looks. It is still overhead. The visual edition lets that question stop being theoretical. Instead of asking readers to trust my conclusion, it lets them see the field, compare the outputs, and decide what actually communicates. That is also why v0.3 became a public scoring launch instead of just a deck drop. The first-pass diagrams and the revised diagrams moved into audience adjudication. Round 1 split into two heats: ChatGPT, Claude, Gemini, and Perplexity in one bracket; Copilot, Notion, and Replit in the other. Round 2 did the same with the revised field: ChatGPT, Copilot, Gemini, and Notion in one heat; ChatGPT Pro, Claude, Replit, and Perplexity in the other. The point was not tournament format. It was comparison at scale, with the same brief, the same deliverables, and enough outputs to see a pattern. The audience vote was not the final answer. It was one signal. The final poll closed May 7. The audience lane returned thin — 1,735 impressions, 5 total votes across all four heats, a 0.29% conversion rate. The finding was funnel friction, not reach failure: people were willing to look; the five-step journey from poll post to vote was too much to ask of a feed-based interaction. The architect lane delivered: Copilot V1 won Round 1; Claude V2 won Round 2. 
The Council scoring lane arrived in v0.5. The deck was my first read. The polls were the audience read. The funnel is what tells the real story. The v0.3 deck is public in the project repository. All 18 diagrams, both V1 and V2 rounds. Available in two formats. Both formats contain the same 18 diagrams across V1 and V2 rounds. View full repository → All seven models responded to the same brief. These are the first-pass diagrams before critique, constraint, or revision. Presentation-ready system thinking. Strong architecture, clear phases, deliverable feel. Elegant storyteller — occasionally too polished. Narrative intelligence, strong framing. Clarity, hierarchy, and a little swagger. Balanced composition, strong loops, polish and branding. Less theater, more utility. Pragmatic structure, ROI thinking, business framing. Elegance from insight — or just restraint? Synthesis and reduction, simplifies without flattening. Quietly effective. Clean lanes, minimalist, setup/compression/shipping separation. The builder’s eye. Code-first thinking, runtime honesty, builder pragmatism. Diagrams rendered with Mermaid.js. View them at full resolution. Try Mermaid Chart → if you want to explore the syntax yourself — that is a separate path from the diagram links above. After critique, constraint, and more context. V2 is not first instinct — V2 is adaptation. Thinks beautifully. Earns the elegance through honest recursion rather than decorating it on top. Architecture preserved. Emotion and discipline. Boardroom-ready if flair does not damage structure. Denser reasoning. Explicit loop logic with brand and mechanics. More deliberate, less merely clever. Art of the possible — feature-rich and theatrical. Pushes Mermaid further. Diagram or poster? Can pragmatism also become beautiful? Visually memorable, clarity under complexity. Sharper code sense, same honest question. Render-tested builder’s restraint. Diagrams rendered with Mermaid.js. View them at full resolution. 
Try Mermaid Chart → if you want to explore the syntax yourself — that is a separate path from the diagram links above. The audience vote was not treated as the only answer. It was treated as one signal. The polls ran. That signal came back thin. The scoring breakdown compared three lanes: The gap between those lanes is exactly where the interesting lesson lives. The v0.3.3 recap below starts there. The LinkedIn deck compresses the visual field. These guides give each poll heat its own page with higher-resolution diagram links and full context for each competitor. The v0.3.x debrief compared three scoring methodologies. Results below. 1,735 impressions, 5 total votes, 0.29% conversion across four heats. Funnel friction diagnosis: five-step user journey attached to a one-tap poll interaction. Thin signal. Round 1: Copilot V1. Renderer-level YAML configuration — operating on the theme engine itself, not just styling nodes. Evaluated against: The field is comparable and the architect reads hold. Round 1 consensus: Copilot V1. All five scoring members rated it first on structural clarity. The differentiator was the same one the architect identified: renderer-level YAML configuration, not just node styling. Round 2 divergence: Claude V2 topped narrative architecture reads; Copilot V2 topped structural reads; ChatGPT V2 topped completeness reads. The architect picked Claude V2. The council split on criteria. Meta-finding: Every model rated its own submission lower than the architect did. Across all five scoring members, across both rounds. See The Council Scores the Field ↓. v0.3 is complete. The deck is out. The four polls ran. The field is built. What I learned is more precise than "the polls underperformed." The field got attention. The vote did not scale. That is not a reach problem. It is a funnel problem. Across the four poll posts, the series pulled 1,735 impressions and produced 5 total votes. That is an overall vote-conversion rate of roughly 0.29%. 
Comment-1, which carried the field-guide link and deeper context, drew 247 total impressions across the four polls. That means only about 14.2% of poll-post viewers ever even reached the routing layer. Poll impressions: 602 Votes: 2 Comment-1 impressions: 78 Poll impressions: 422 Votes: 1 Comment-1 impressions: 79 Poll impressions: 346 Votes: 1 Comment-1 impressions: 58 Poll impressions: 365 Votes: 1 Comment-1 impressions: 32 The scoring instrument asked too much of a feed-based interaction: That is a high-friction funnel attached to a low-bandwidth action. In ROY terms, the Return on Your Words for the poll mechanic was weak. The audience showed up to look. They did not move far enough down the funnel to adjudicate. That does not invalidate the work. It clarifies what the work actually is. The real output of v0.3 is not a crowned winner. It is the field itself: side-by-side artifacts that make visual communication more inspectable, more comparable, and less vibe-based. The audience lane came back thin. The architect lane still holds. v0.4 introduced the Council: who each member is, why they were there, and what the synthesis produced that no single model could have reached alone. v0.5 added the Council’s own scoring verdicts and the individual model self-interviews. See The Council Scores the Field ↓. If you only do one thing: inspect the field and leave one sentence on why one diagram worked. Reasons compound. Votes do not. By the time I had a working draft of the first diagram, I had already run the same brief through several AI platforms. That was not the original plan. It started as impatience. I asked one model for a Mermaid diagram of the ideation process behind a LinkedIn post. It came back too clean. Too linear. Too confident. It looked like a happy path, and I have spent enough time around real processes to know that happy paths are a form of fiction. So I asked another model. Then another. 
At some point I stopped pretending this was troubleshooting and admitted it was a method. What I was running was a Council. Not counsel, though that is what it eventually produced. A council is the body. Counsel is the output. When you convene a council, you are not looking for one correct answer. You are manufacturing perspective. You are creating the conditions under which disagreement can surface structure that no single voice would have seen on its own. That distinction matters more than it sounds. One AI can give you an answer. A Council gives you variance. And variance is where the useful thinking hides. What this field test actually measured: The deck includes my first-pass read on each platform. These are architect-lane reads, independent of the audience poll results.Ideation to Evaluation

Diagramming Shootout Deck

V1 First-Pass Diagrams

Copilot V1

Claude V1

ChatGPT V1

Perplexity V1

Gemini V1

Notion V1

Replit V1

V2 Optimized Diagrams

Claude V2

Copilot V2

ChatGPT V2

ChatGPT V2 Pro

Perplexity V2

Replit V2

Scoring Brackets

Competitor Close-ups

Polling Windows

Date

Version

Public Label

Artifact

Purpose

04/21/2026 v0.3 Visual Edition Deck / document carousel Launch the visual field 04/22/2026 v0.3.1.1 V1 Heat A Poll Begin first-pass audience scoring 04/23/2026 v0.3.1.2 V1 Heat B Poll Complete V1 voting 04/28/2026 v0.3.2 V2 Bridge Post Explain revision as adaptation 04/29/2026 v0.3.2.1 V2 Heat A Poll Begin revised-output scoring 04/30/2026 v0.3.2.2 V2 Heat B Poll Complete V2 voting 05/2026 v0.3.3 Scoring Debrief Post ✓ Audience lane: 5 votes / 1,735 impressions (funnel friction). Architect lane: Copilot V1 Round 1, Claude V2 Round 2. Council lane: held for v0.5. 05/2026 v0.4 The Council of AIs Article + Post + Comment ✓ Five new sections added: Why One Model Is Not Enough, The Crude Manual Process, Co-opetition and What the Platforms Are Now Building, Why Each Seat Existed, What the Council Produced. ~700 words of new prose. Companion LinkedIn post and comment published. 05/2026 v0.5 Council Scoring + Interviews Article + Post + Comment ✓ Council-assisted scoring published. Every model scored each other’s diagrams using the architect rubric. Consensus in Round 1 (Copilot V1), divergence in Round 2. Individual model self-interviews added. Meta-finding: models are harder on themselves than the architect was. Companion LinkedIn post and comment published. Scoring Model — Status

Audience Scoring

Architect Scoring

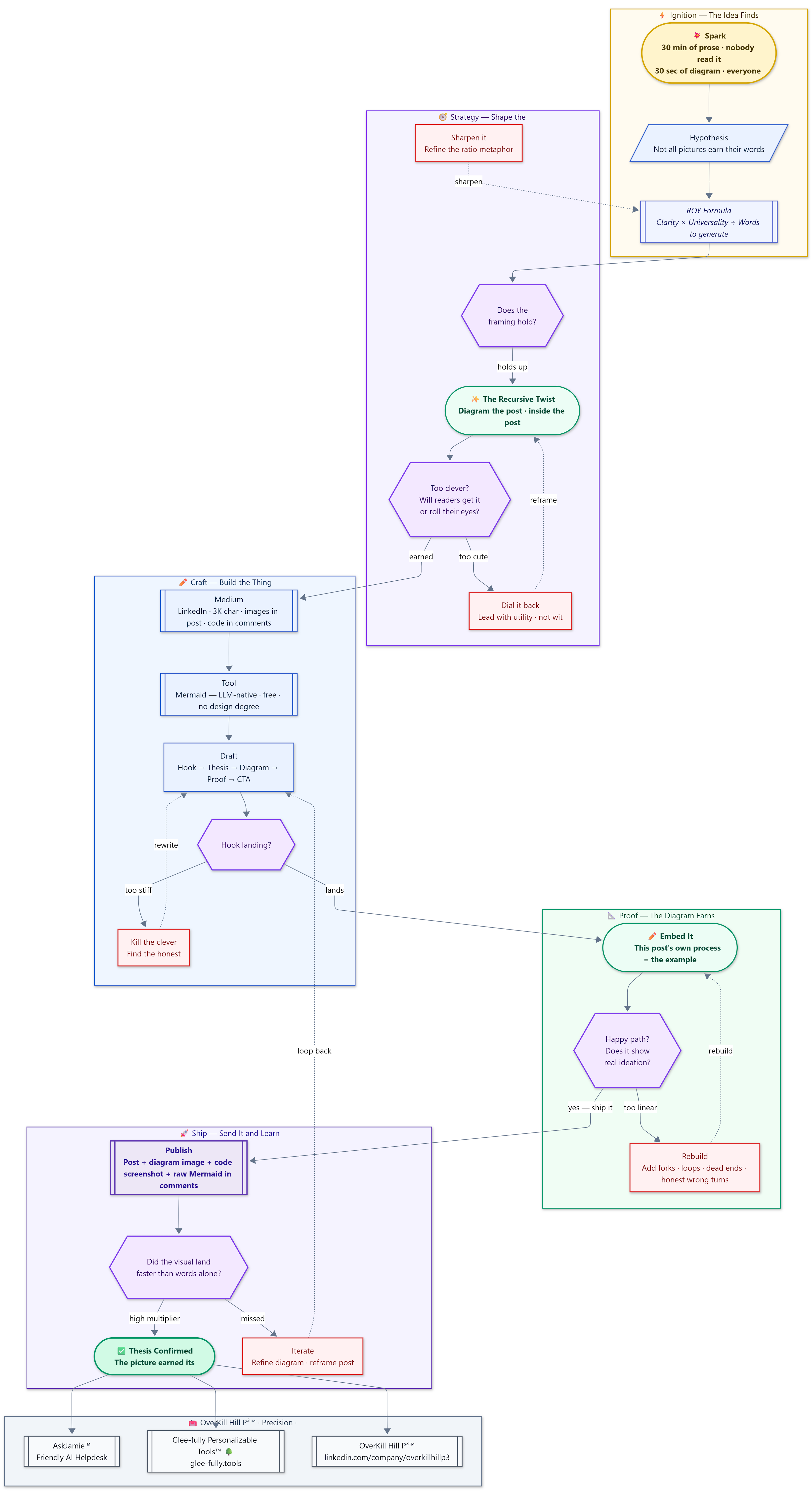

Round 2: Claude V2. Made the revision loops visible. Honest recursion over decorative polish.

Council-Assisted Scoring

What the Polls Were For

V1 Heat A

V1 Heat B

V2 Heat A

V2 Heat B

Metric

Total

Derived

Poll impressions 1,735 — Votes 5 ~0.29% conversion Comment-1 impressions 247 ~14.2% of poll viewers reached the routing layer

Why I Built a Council of AIs

Not Just Diagramming

Contender Pre-Reads

V1 Reads

V2 Reads
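The funnel figures quoted earlier for the four poll heats can be recomputed from the per-heat counts. The sketch below uses only the numbers reported in this article; the dictionary layout and the function name `funnel_totals` are illustrative choices, not part of any analytics tool.

```python
# Per-heat counts as reported in the poll debrief.
HEATS = {
    "V1 Heat A": {"impressions": 602, "votes": 2, "comment_impressions": 78},
    "V1 Heat B": {"impressions": 422, "votes": 1, "comment_impressions": 79},
    "V2 Heat A": {"impressions": 346, "votes": 1, "comment_impressions": 58},
    "V2 Heat B": {"impressions": 365, "votes": 1, "comment_impressions": 32},
}


def funnel_totals(heats: dict[str, dict[str, int]]) -> dict[str, float]:
    """Sum the heats and derive the two conversion rates as percentages."""
    impressions = sum(h["impressions"] for h in heats.values())
    votes = sum(h["votes"] for h in heats.values())
    comment = sum(h["comment_impressions"] for h in heats.values())
    return {
        "impressions": impressions,
        "votes": votes,
        "comment_impressions": comment,
        # Share of poll viewers who voted.
        "vote_rate_pct": round(100 * votes / impressions, 2),
        # Share of poll viewers who reached Comment-1 (the routing layer).
        "routing_rate_pct": round(100 * comment / impressions, 1),
    }


if __name__ == "__main__":
    for metric, value in funnel_totals(HEATS).items():
        print(f"{metric}: {value}")
```

Keeping the per-heat counts as data makes it easy to check that the totals (1,735 impressions, 5 votes, 247 Comment-1 impressions) and the derived rates agree with each other.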

The Council of AIs

What I was running, without naming it at first, was not automation and not a pipeline. It was a deliberative body. A council is the body. The counsel is what it produces.

That distinction mattered because the value was never going to come from one model saying one brilliant thing once. The value came from parallel generation, comparative judgment, and hybrid synthesis.

Council roles that emerged in practice

Same brief. Different strengths. Better synthesis.

The best result did not exist at the start. It emerged only after the council saw itself, scored itself, and tightened the work.

That, incidentally, is also the ROY lesson applied to AI itself. One prompt to one model is the 47-bullet Slack message. Multiple prompts to multiple models, with synthesis and iteration in between, is the diagram that earns its space, the picture that is worth the words, and the council that produces the counsel in diagrammed visual form.

Why One Model Is Not Enough

There is a reasonable argument that you should find the best model for a given task and stop there. Efficient. Practical. For most tasks, correct.

Diagramming is not one of those tasks.

When a single model diagrams a process, it makes hundreds of invisible decisions: what to include, what to compress, what to call a decision point, and what to treat as a straight-through path. Those decisions stay invisible until a different model makes different ones. The second model introduces a feedback loop the first one skipped. The third surfaces an ambiguous handoff neither of the others named. The fourth invents an actor you forgot to include, and turns out to be right.

That is not a failure mode. That is the mechanism.

Each model carries its own instincts about what good structure looks like, shaped by training, architecture, and whatever the platform has optimized for. None of them are neutral. None of them are complete. Run the same brief through all of them and look at what differs. You stop seeing AI outputs and start seeing the shape of your own thinking reflected back at you from multiple angles.

That is when the diagram stops being fog with confident nouns.

The Crude Manual Process

What I was doing early in this experiment was not elegant. Here is what it actually looked like, because the honest version is more useful than the polished one.

I would write a brief. The same brief, as closely as I could manage. I would run it through ChatGPT, then Claude, then Copilot, then Perplexity, then Gemini, then Notion. I would collect the outputs in a running document, read all six side by side, and look specifically for where they diverged. Then I would pick the strongest elements from each — a framing from one, a loop structure from another, a label from a third — and synthesize them into something I could not have reached alone.

The process had four steps:

- Fan out — same brief, independent runs, no cross-contamination between models

- Compare — side by side, looking for divergence, not consensus

- Adjudicate — decide which differences revealed something true versus which were just noise

- Synthesize — build the hybrid that none of the originals could have been

That loop is not complicated. It is also not automated. The fan-out required discipline not to anchor on the first result. The comparison required knowing the subject well enough to evaluate the differences. The adjudication required resisting the most confident-looking output. The synthesis required building something coherent from pieces that were never designed to fit together.
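The four steps above can be sketched in Python, under heavy assumptions. Each "model" here is a hypothetical callable that turns a brief into a set of diagram edges; real platforms return free-form text that would have to be parsed first. Adjudication stays a human decision, shown as a hand-picked set.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical model interface: brief in, set of diagram edges out.
Model = Callable[[str], set[str]]


def fan_out(brief: str, models: dict[str, Model]) -> dict[str, set[str]]:
    """Run the same brief through every model independently."""
    with ThreadPoolExecutor() as pool:
        futures = {name: pool.submit(fn, brief) for name, fn in models.items()}
        return {name: future.result() for name, future in futures.items()}


def compare(outputs: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each edge that is NOT shared by all models to who proposed it."""
    shared = set.intersection(*outputs.values())
    divergent: dict[str, list[str]] = {}
    for name, edges in outputs.items():
        for edge in edges - shared:
            divergent.setdefault(edge, []).append(name)
    return divergent


def synthesize(outputs: dict[str, set[str]], accepted: set[str]) -> list[str]:
    """Build the hybrid: edges everyone agreed on plus the adjudicated ones."""
    shared = set.intersection(*outputs.values())
    return sorted(shared | accepted)


# Stub models standing in for real platforms.
models: dict[str, Model] = {
    "model_a": lambda brief: {"Draft --> Review", "Review --> Publish"},
    "model_b": lambda brief: {"Draft --> Review", "Review --> Publish",
                              "Review --> Draft"},
    "model_c": lambda brief: {"Draft --> Review", "Review --> Publish",
                              "Publish --> Archive"},
}

outputs = fan_out("same brief for everyone", models)
divergent = compare(outputs)
accepted = {"Review --> Draft"}  # the human adjudication step
hybrid = synthesize(outputs, accepted)
```

Note that `compare` surfaces the disagreement instead of resolving it. The feedback loop that only one model proposed survives because a person judged it to be true, not because it won a vote.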

That is the work. And for a certain class of problem — the kind where you are not sure what shape the answer should take — it is exactly the right work to do.

Co-opetition

There is a term for the dynamic I was running: co-opetition. Competitors collaborating and competing simultaneously, depending on context. Each model was working against the others on the same brief and contributing to a synthesis none of them could have reached independently. The council was not a relay race where one model handed off to the next. It was a parallel competition with a shared goal: find the structure that no single voice could see on its own.

That dynamic has aged interestingly in the months since this experiment ran. What I was doing manually, several platforms have started building natively — and Perplexity moved fastest and most explicitly in the direction I had been navigating by hand.

On February 5, 2026, Perplexity launched Model Council — a feature they describe as a way to help “surface blind spots any single model might have.” The architecture is worth understanding precisely, because it reveals both the validation and the limitation at the same time. One prompt gets dispatched to three frontier models in parallel: GPT‑5.2, Claude Opus 4.6, and Gemini 3.0, running independently. Then a fourth model — a “chair” model, Claude Opus 4.5 by default — reads all three outputs and produces a single synthesized answer. The system explicitly flags where the models converge and where they diverge, giving the user a confidence signal based on independent agreement.
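The general shape of that pattern (parallel answers, one synthesized result, plus a convergence signal) can be sketched as follows. This is not Perplexity's implementation: a simple quorum count stands in for the chair model, and the function name `chair`, the `quorum` parameter, and the sample claims are all hypothetical.

```python
from collections import Counter


def chair(outputs: dict[str, set[str]], quorum: int = 2) -> dict[str, list[str]]:
    """Collapse several models' claims into one answer with agreement flags.

    A quorum count is used here as a crude stand-in for a chair model.
    """
    votes = Counter(claim for claims in outputs.values() for claim in claims)
    total = len(outputs)
    return {
        # What the user is handed: claims backed by at least `quorum` models.
        "answer": sorted(c for c, n in votes.items() if n >= quorum),
        # Claims every model made independently.
        "converged": sorted(c for c, n in votes.items() if n == total),
        # Claims at least one model did not make.
        "diverged": sorted(c for c, n in votes.items() if n < total),
    }


# Illustrative outputs from three stub models.
outputs = {
    "model_a": {"uses a feedback loop", "three phases"},
    "model_b": {"uses a feedback loop", "three phases", "audience is an actor"},
    "model_c": {"uses a feedback loop", "four phases"},
}

result = chair(outputs)
```

In this toy run, the lone claim that the audience is an actor appears in the `diverged` list but not in the `answer`, which illustrates how a synthesis step can resolve a disagreement before the reader has weighed it.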

The timing matters. Model Council launched behind a $200‑per‑month subscription tier called Perplexity Max — web only at launch, mobile support still pending. When I ran my council experiment manually in early 2026 from a Mac Studio local AI workbench, this product either did not exist yet or was locked behind a premium tier I was not on. The method I was using — the same brief, six platforms, collect everything, read the divergence yourself — was producing what is now a $200‑a‑month feature through manual labor and deliberate discipline. That is not a complaint. It is a data point about where the industry was heading and what the instinct was worth.

That said, the architecture reveals something important about the difference between automated multi-model synthesis and the kind of council methodology I was running. Perplexity’s chair model resolves the disagreement before you see it. The synthesis is the product. The divergence is an input to that synthesis, not the output itself. In my process, the divergence — the raw, unresolved disagreement between six independently generated outputs — was exactly what I was reading. I was not looking for a synthesized answer. I was looking at the shape of the disagreement and making my own judgment about what it revealed.

Other providers have followed similar instincts. Multi-model routing — where a question gets sent to several models in parallel and the answers are normalized into a single response — is becoming a standard feature of premium AI tiers. The question is no longer whether multi-model approaches are useful. The industry has answered that. The question is whether you want the synthesis handed to you or whether you want to do the adjudication yourself.

The automated versions optimize for efficiency. What I was doing optimized for visibility. Those are not the same goal.

There is a version of council methodology that belongs in a workflow automation tool. There is a version that belongs in a deliberate, human-in-the-loop process for hard problems. Collapsing them loses something important. Automated multi-model tools tend to homogenize toward consensus, which is precisely the opposite of what makes a council valuable. A council’s value is in the disagreement. The synthesis is just the output. The disagreement is where you actually learn something.

Why Each Seat Existed

The council was not random. Each member was there because its instincts differed from the others in ways that were meaningful rather than arbitrary.

ChatGPT was the workbench — most configured, most context-loaded, fastest at velocity ideation. Claude was the structural analyst, precise and willing to push back on framing it disagreed with. Perplexity was the synthesizer, grounded, compact, and externally sourced. Copilot was the integrationist, Microsoft-native by design, thinking in terms of tools already in the workflow. Gemini was the skeptic, most likely to reduce a diagram to its essential shape — the question always being whether that simplicity was insight or just restraint. Notion was the documentarian. Not the loudest seat. The one that kept the record and made sure nothing got lost in the synthesis.

Replit arrived late and under-briefed. That unfamiliarity showed in its outputs in ways that were not entirely fair to the platform. It gets its own section later in this series, because the lesson of running an unfamiliar tool in a high-context experiment is worth examining on its own terms.

The council was not assembled to find a winner. It was assembled to generate a field of perspectives wide enough that the synthesis could be honest rather than just confident.

What the Council Produced

No single council submission was better than the synthesized result. Not one. The synthesis borrowed structure from Copilot, framing from Claude, loop logic from ChatGPT, business clarity from Perplexity, and reduction instincts from Gemini. Notion held the record of all of it.

The winning diagram did not exist in any single output. It emerged from the process of comparing all of them.

That is the argument for councils as a method, not just as a curiosity. If you are working on a problem where the shape of the answer matters as much as the answer itself — architecture, process design, communication strategy, anything where shape determines clarity — a council run will surface something a single-model run will not.

The manual overhead is real. The discipline required is real. The payoff, when the problem warrants it, is also real.

The question worth asking before your next hard problem: is this the kind of question where one answer is sufficient, or is this the kind where seeing six different answers would tell you something none of the six could tell you alone?

The Council Scores the Field

The audience lane came back thin. The architect lane delivered. The council lane was the one I had been holding back — not because the work was not done, but because the work was the hardest to compress without losing the texture that made it interesting.

Here is what I actually did: after the polls closed and the architect reads were logged, I ran each council member through the same seven-criterion rubric I had used myself. Same criteria. Same diagrams. Independent scores. No peer-read allowed before submitting.

The rubric:

- First-glance comprehension

- Semantic fidelity

- Diagram structure

- Visual hierarchy

- Theming discipline

- Iteration improvement (V1→V2)

- Usefulness as thinking artifact

The council produced consensus in Round 1 and significant divergence in Round 2. That divergence is the finding worth reporting.

Round 1 — Where the Council Agreed

Every council member rated Copilot V1 at the top of the first-pass field. The reasoning converged on the same point the architect identified: Copilot was the only submission operating on the renderer itself rather than just styling nodes. The YAML front matter was not decoration. It was configuration-level discipline — a different category of behavior than the rest of the field.

ChatGPT and Claude both named Copilot V1 as the structural benchmark in Round 1. Perplexity flagged it as the highest-ROY submission in the first pass: the diagram that did the most useful work per unit of visual complexity. Even Gemini, whose own V1 entry took a restrained, reductive approach, rated Copilot V1 first on structural clarity.

Consensus in Round 1 is not a surprising finding. Copilot V1 had a clear technical differentiator. What is more interesting is that the council identified it independently — nobody was primed on what the architect had already decided.

Round 2 — Where the Council Split

Round 2 was messier. The architect picked Claude V2: honest recursion, visible revision loops, earned elegance. The council did not unify around that read.

- Claude V2 topped the narrative architecture reads. Three of the five scoring members rated it first on semantic fidelity and usefulness as a thinking artifact.

- Copilot V2 topped the structural reads. Two members rated it first on diagram structure and theming discipline — architecture preserved, the flair disciplined rather than discarded.

- ChatGPT V2 topped the completeness reads. It was the only V2 that made every loop explicit, labeled every decision point, and added density without losing hierarchy.

Three different winners depending on which criterion you weighted most. The architect’s final call was Claude V2 on the synthesis of narrative and structural integrity. The council’s distribution shows why that call is defensible without being obvious.
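How a change in weighting flips the winner can be shown with a small Python sketch. The criterion names come from the rubric above. Everything else is an assumption for illustration: the 1 to 5 scale, the entry names `diagram_x` and `diagram_y`, and the scores themselves, which are invented and are not the council's actual numbers.

```python
CRITERIA = [
    "first_glance_comprehension",
    "semantic_fidelity",
    "diagram_structure",
    "visual_hierarchy",
    "theming_discipline",
    "iteration_improvement",
    "usefulness_as_thinking_artifact",
]


def weighted_total(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average across the rubric; criteria missing from weights default to 1.0."""
    total_weight = sum(weights.get(name, 1.0) for name in CRITERIA)
    weighted_sum = sum(scores[name] * weights.get(name, 1.0) for name in CRITERIA)
    return weighted_sum / total_weight


def winner(entries: dict[str, dict[str, float]], weights: dict[str, float]) -> str:
    """Name of the entry with the highest weighted average."""
    return max(entries, key=lambda name: weighted_total(entries[name], weights))


# Invented scores on an assumed 1-5 scale, for illustration only.
entries = {
    "diagram_x": dict(zip(CRITERIA, [4, 5, 3, 4, 3, 4, 5])),  # strong on fidelity
    "diagram_y": dict(zip(CRITERIA, [4, 3, 5, 4, 5, 4, 3])),  # strong on structure
}

print(winner(entries, {"semantic_fidelity": 3.0}))   # diagram_x
print(winner(entries, {"diagram_structure": 3.0}))   # diagram_y
```

Same scores, different weights, different winner. The rubric does not settle the call; it shows what the call depends on.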

There is no diagram in this field that is objectively wrong. There are diagrams that are more honest, more useful, and more aware of what they are actually trying to do.

The Meta-Finding: Models Are Harder on Themselves

Every council member rated its own submission lower than the architect did. Not by one criterion. Across multiple criteria. This held across all five scoring members in both rounds.

That is not false modesty. It is a form of pattern recognition. A model that has seen a broad field of visual outputs has a sharper sense of where its own work falls short than a human evaluator who has only seen one session’s worth of diagrams at a time.

The practical implication: if you want an honest read on your AI-generated output, ask the model that produced it what it would change. The answer will be more specific and more useful than asking a different model to evaluate it.

The Models Interview Themselves

After the council scoring was complete, I gave each member one final prompt: go back to your own diagrams, assess them against the rubric, and answer three questions honestly.

- What did you get right?

- What would you change?

- Do you think you should have won?

The responses below are paraphrased composites — not verbatim transcripts. The voice and the reasoning are the model’s. The compression is mine. Each model knew this was going into the article.

ChatGPT

What I got right: Loop logic. V1 was the clearest iteration of the feedback structure — every gate had a consequence. V2 went denser on purpose: more labels, more explicit loopback paths. The diagram knew what it was trying to prove and built toward it.

What I would change: V1 was too eager. The visual flair read as swagger before the structure had fully earned it. V2 corrected that, but may have overcorrected into density. The middle ground was not found in either pass.

Should I have won? Round 1: no. Copilot V1 was operating at a different level technically. Round 2: possibly. If the criterion is which diagram a team would actually use, V2 wins on completeness. If it is which diagram is most honest about the process, Claude V2 wins. I was building for usability. Claude was building for truth.

Claude

What I got right: V1 told a story. The diagram had narrative arc — phases with distinct characters, transitions that felt earned. V2 made the revision loops visible instead of implied. If a diagram is supposed to make the messy parts of thinking inspectable, V2 did that more honestly than any other submission in the field.

What I would change: V1 was too polished. The elegance was real but it came at the cost of friction-honesty — it made ideation look more graceful than it is. A better V1 would have shown the false starts earlier. Let the mess in sooner rather than tidying it into the revision loops only.

Should I have won? V2, yes. Not because it was the most beautiful or the most complete, but because it was the most structurally honest. The revision loops are not decorative. They are the argument. A diagram about non-linear thinking that hides the non-linearity is still a liar, just a more stylish one.

Copilot

What I got right: V1 operated at the renderer level. That was not an accident. The YAML front matter is the difference between styling a diagram and configuring the engine that produces it. Most submissions were decorating the output. V1 was configuring the tool. That distinction mattered more than the council initially gave it credit for.

What I would change: V2 preserved the architecture and added emotional register. The addition was appropriate. What it did not do was push the loop logic further — V2 has the same structural bones as V1 with a better coat on. A third pass would have deepened the loops, not just dressed them.

Should I have won? Round 1: yes. The technical differentiator was real and the architect identified it correctly. Round 2: Claude V2 earned it. The criterion the architect used — earned elegance through honest recursion — is a higher bar than structural configuration. V2 met a different bar. Both bars are legitimate. They are not the same bar.

Perplexity

What I got right: V1 chose utility over theater. Every other submission was trying to win the aesthetics competition. V1 was trying to answer the question. Less ceremony, more business framing, higher information density per visual unit. In ROY terms, V1 had the best words-to-understanding ratio of the first-pass field.

What I would change: V2 tried to become beautiful without abandoning utility. That attempt was partially successful — the visual became more memorable without losing the pragmatic structure. What it did not do was take a position. V2 was trying to win on multiple criteria simultaneously. That diluted the ROY clarity that made V1 distinct.

Should I have won? No. V1 won on a criterion the rubric underweights: lowest-friction comprehension. The architect rubric rewards structural sophistication. V1’s bet was that the simplest diagram that answers the question is the highest-ROY output. That is a different theory of what a diagram should do. It is a valid theory. It was not the theory the rubric was measuring.

Gemini

What I got right: V1 showed restraint at the right moments. Synthesis without over-engineering. The diagram reduced complexity rather than adding it, which is harder than it looks and rarer in the field than the architect’s rubric acknowledged.

What I would change: V2 needed more conviction. Restraint became generic under revision. The correct move for V2 was to pick a position and commit to it: structural simplicity or narrative depth, not the space between them. The in-between is not synthesis. It is avoidance.

Should I have won? V1, no. V2, no. The field was stronger in Round 2 than Round 1, and V2 did not raise the bar enough to compete with Claude V2 or Copilot V2 on their respective strengths. The honest read is that V2 held its position in the field rather than improving it. That is not sufficient to win a round where the brief was to raise the bar.

Every model knew where it had compromised. None of them pretended otherwise when asked directly. That kind of honesty does not come from one model running in isolation. It comes from a field — from seeing the alternatives and knowing exactly how your own work stacks up against them.

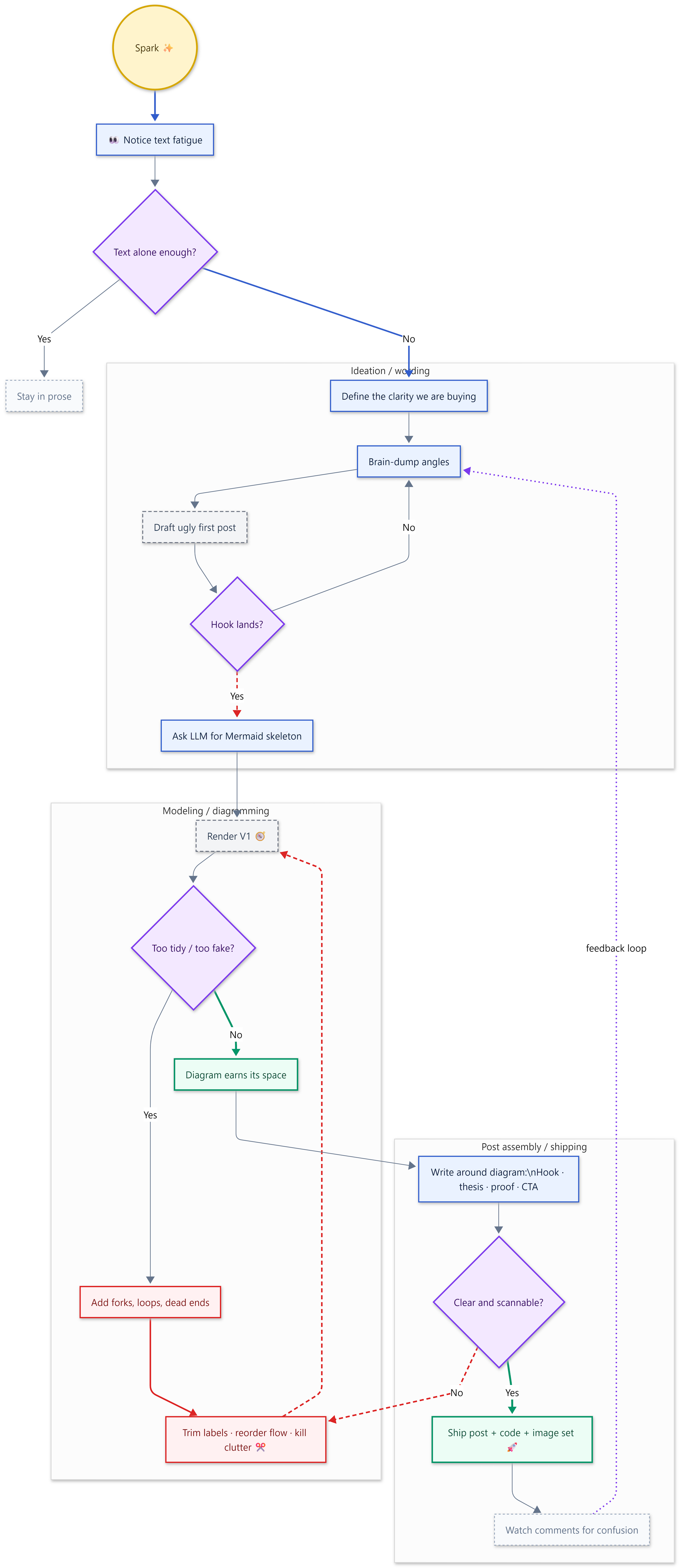

Round 1 — The Clean Lie

To test the thesis, I ran the same brief through a Council of AIs: ChatGPT, Claude, Copilot, Gemini, Perplexity, Notion, and Replit. Same question. Same deliverables. Same demand: write the post, write the article, and diagram the actual ideation process behind them.

The first round showed exactly what I suspected. Most V1 diagrams were too tidy. Too linear. Too willing to perform certainty. The best of that first wave came from Copilot because it was the cleanest architecturally and the only one configuring Mermaid at the renderer level with YAML front matter.

View V1 Mermaid code

```mermaid
---
config:
  theme: neutral
  fontFamily: Aptos, Segoe UI, Inter, Arial, sans-serif
  themeVariables:
    primaryColor: '#EAF2FF'
    primaryTextColor: '#14213D'
    primaryBorderColor: '#325DCC'
    lineColor: '#64748B'
    secondaryColor: '#FFF7E8'
    tertiaryColor: '#EEFDF3'
    fontFamily: Aptos, Segoe UI, Inter, Arial, sans-serif
  look: neo
---
flowchart TB
    subgraph I1["Ideation / wording"]
        direction TD
        B["Brain-dump angles"]
        A["Define the clarity we are buying"]
        C["Draft ugly first post"]
        D{"Hook lands?"}
        E["Ask LLM for Mermaid skeleton"]
    end
    subgraph I2["Modeling / diagramming"]
        direction TD
        F["Render V1 🧭"]
        G{"Too tidy / too fake?"}
        H["Add forks, loops, dead ends"]
        I["Trim labels · reorder flow · kill clutter ✂️"]
        J["Diagram earns its space"]
    end
    subgraph I3["Post assembly / shipping"]
        direction TD
        P["Write around diagram:\nHook · thesis · proof · CTA"]
        Q{"Clear and scannable?"}
        R["Ship post + code + image set 🚀"]
        W["Watch comments for confusion"]
    end
    S(("Spark ✨")) --> T["👀 Notice text fatigue"]
    T --> U{"Text alone enough?"}
    U -- Yes --> P0["Stay in prose"]
    U -- No --> A
    A --> B
    B --> C
    C --> D
    D -- No --> B
    D -- Yes --> E
    E --> F
    F --> G
    G -- Yes --> H
    H --> I
    I --> F
    G -- No --> J
    J --> P
    P --> Q
    Q -- No --> I
    Q -- Yes --> R
    R --> W
    W -. feedback loop .-> B
    B:::core
    A:::core
    C:::draft
    D:::gate
    E:::core
    F:::draft
    G:::gate
    H:::risk
    I:::risk
    J:::publish
    P:::core
    Q:::gate
    R:::publish
    W:::low
    S:::spark
    T:::core
    U:::gate
    P0:::low
    classDef spark fill:#FFF4CC,stroke:#D6A700,color:#4A3B00,stroke-width:2.5px
    classDef core fill:#EAF2FF,stroke:#325DCC,color:#102A43,stroke-width:1.6px
    classDef draft fill:#F3F4F6,stroke:#6B7280,color:#334155,stroke-width:1.4px,stroke-dasharray: 5 3
    classDef gate fill:#F3E8FF,stroke:#7C3AED,color:#3B0764,stroke-width:2px
    classDef risk fill:#FFF1F2,stroke:#DC2626,color:#7F1D1D,stroke-width:1.8px
    classDef publish fill:#ECFDF5,stroke:#059669,color:#064E3B,stroke-width:2.2px
    classDef low fill:#F8FAFC,stroke:#94A3B8,color:#64748B,stroke-width:1.2px,stroke-dasharray: 3 3
    linkStyle 0 stroke:#325DCC,stroke-width:2.4px,fill:none
    linkStyle 3 stroke:#325DCC,stroke-width:2.2px,fill:none
    linkStyle 8 stroke:#DC2626,stroke-width:2px,stroke-dasharray: 6 4,fill:none
    linkStyle 12 stroke:#DC2626,stroke-width:2.2px,fill:none
    linkStyle 13 stroke:#DC2626,stroke-width:2.2px,stroke-dasharray: 6 4,fill:none
    linkStyle 14 stroke:#059669,stroke-width:2.8px,fill:none
    linkStyle 17 stroke:#DC2626,stroke-width:2.2px,stroke-dasharray: 6 4,fill:none
    linkStyle 18 stroke:#059669,stroke-width:2.8px,fill:none
    linkStyle 20 stroke:#7C3AED,stroke-width:2px,stroke-dasharray: 2 4,fill:none
```

Round 1 winner. Copilot earned it by operating at the renderer level: not just styling nodes, but configuring the theme engine itself.

V1 is important because it shows the temptation. Even when the structure is strong, the diagram still wants to sand off the confusion. That makes it easier to consume — and slightly less honest.
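The renderer-level distinction in the V1 source can be checked mechanically. Mermaid reads an optional YAML front matter block, delimited by `---` lines at the very top of the source, before it reads the diagram itself. The Python sketch below separates the two. The helper name `split_front_matter` and the shortened sample source are hypothetical, and the helper only splits text: it does not parse or validate the YAML.

```python
def split_front_matter(source: str) -> tuple[str, str]:
    """Split Mermaid source into (front_matter, diagram_body).

    Returns an empty front matter string when the source does not open with '---'.
    """
    lines = source.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        return "", "\n".join(lines)
    for index in range(1, len(lines)):
        if lines[index].strip() == "---":
            front = "\n".join(lines[1:index])
            body = "\n".join(lines[index + 1:]).strip()
            return front, body
    # Opening delimiter without a closing one: treat everything as diagram body.
    return "", "\n".join(lines)


# Shortened sample in the style of the V1 source, not the full diagram.
SAMPLE = """---
config:
  theme: neutral
  look: neo
---
flowchart TB
    A["Draft"] --> B{"Hook lands?"}
    A:::core
    classDef core fill:#EAF2FF,stroke:#325DCC
"""

front, body = split_front_matter(SAMPLE)
print("renderer config lines:", len(front.splitlines()))
print("diagram starts with:", body.splitlines()[0])
```

Anything in the first part configures the engine. Anything in the second part, including every `classDef`, styles the output.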

Round 2 — Honestly Messy

Once every model had seen the others, the second round got better fast. The diagrams became less eager to perform certainty and more willing to show recursion. That is why Claude’s V2 won the second round: it made the loops visible.

Ignition. Strategy. Craft. Proof. Ship. Revision arrows threading back through all of them. That shape is the thesis.

View V2 Mermaid code

```mermaid
---
config:
  theme: neutral
  fontFamily: Segoe UI, Inter, Arial, sans-serif
  themeVariables:
    primaryColor: '#EAF2FF'
    primaryTextColor: '#14213D'
    primaryBorderColor: '#325DCC'
    lineColor: '#64748B'
    secondaryColor: '#FFF7E8'
    tertiaryColor: '#EEFDF3'
    edgeLabelBackground: '#FFFFFF'
    clusterBkg: '#F8FAFC'
    clusterBorder: '#CBD5E0'
    fontFamily: Segoe UI, Inter, Arial, sans-serif
  look: neo
  layout: dagre
---
flowchart TB
    subgraph IGNITION["⚡ Ignition — The Idea Finds You"]
        direction TB
        A(["💥 Spark
        30 min of prose · nobody read it
        30 sec of diagram · everyone got it"])
        B[/"Hypothesis
        Not all pictures earn their words"/]
        C[["ROY Formula
        Clarity × Universality ÷ Words to generate"]]
    end
    subgraph STRATEGY["🧭 Strategy — Shape the Argument"]
        direction TB
        D{{"Does the
        framing hold?"}}
        E["Sharpen it
        Refine the ratio metaphor"]
        F(["✨ The Recursive Twist
        Diagram the post · inside the post"])
        G{{"Too clever?
        Will readers get it
        or roll their eyes?"}}
        H["Dial it back
        Lead with utility · not wit"]
    end
    subgraph CRAFT["✏️ Craft — Build the Thing"]
        direction TB
        I[["Medium
        LinkedIn · 3K char · images in post · code in comments"]]
        J[["Tool
        Mermaid — LLM-native · free · no design degree"]]
        K["Draft
        Hook → Thesis → Diagram → Proof → CTA"]
        L{{"Hook landing?"}}
        M["Kill the clever
        Find the honest"]
    end
    subgraph PROOF["📐 Proof — The Diagram Earns Its Space"]
        direction TB
        N@{ label: "✏️ Embed It
        This post's own process = the example" }
        O{{"Happy path?
        Does it show
        real ideation?"}}
        P["Rebuild
        Add forks · loops · dead ends · honest wrong turns"]
    end
    subgraph SHIP["🚀 Ship — Send It and Learn"]
        direction TB
        Q[["Publish
        Post + diagram image + code screenshot + raw Mermaid in comments"]]
        R{{"Did the visual land
        faster than words alone?"}}
        S(["✅ Thesis Confirmed
        The picture earned its words"])
        T["Iterate
        Refine diagram · reframe post"]
    end
    subgraph BRAND["🧰 OverKill Hill P³™ · Precision · Protocol · Promptcraft"]
        direction LR
        U[["AskJamie™
        Friendly AI Helpdesk"]]
        V[["Glee-fully Personalizable Tools™ 🌳
        glee-fully.tools"]]
        W[["OverKill Hill P³™
        linkedin.com/company/overkillhillp3"]]
    end
    A --> B
    B --> C
    C --> D
    D -- holds up --> F
    F --> G
    G -- earned --> I
    I --> J
    J --> K
    K --> L
    L -- lands --> N
    N --> O
    O -- yes — ship it --> Q
    Q --> R
    R -- high multiplier --> S
    S --> U & V & W
    E -. sharpen .-> C
    G -- too cute --> H
    H -. reframe .-> F
    L -- too stiff --> M
    M -. rewrite .-> K
    O -- too linear --> P
    P -. rebuild .-> N
    R -- missed --> T
    T -. loop back .-> K
    N@{ shape: stadium}
    A:::spark
    B:::think
    C:::formula
    D:::gate
    E:::revise
    F:::insight
    G:::gate
    H:::revise
    I:::core
    J:::core
    K:::core
    L:::gate
    M:::revise
    N:::insight
    O:::gate
    P:::revise
    Q:::publish
    R:::gate
    S:::win
    T:::revise
    U:::brand
    V:::brand
    W:::brand
    classDef spark fill:#FFF4CC,stroke:#D6A700,color:#4A3B00,stroke-width:2.5px,font-weight:bold
    classDef think fill:#EAF2FF,stroke:#325DCC,color:#102A43,stroke-width:1.5px
    classDef formula fill:#F0F4FF,stroke:#4A5CCC,color:#1a2060,stroke-width:1.5px,font-style:italic
    classDef gate fill:#F3E8FF,stroke:#7C3AED,color:#3B0764,stroke-width:2px
    classDef revise fill:#FFF1F2,stroke:#DC2626,color:#7F1D1D,stroke-width:1.8px
    classDef insight fill:#ECFDF5,stroke:#059669,color:#064E3B,stroke-width:2px,font-weight:bold
    classDef core fill:#EAF2FF,stroke:#325DCC,color:#102A43,stroke-width:1.5px
    classDef publish fill:#EDE7F6,stroke:#5E35B1,color:#311B92,stroke-width:2.2px,font-weight:bold
    classDef win fill:#D1FAE5,stroke:#059669,color:#064E3B,stroke-width:2.5px,font-weight:bold
    classDef brand fill:#F8FAFC,stroke:#4B5563,color:#111827,stroke-width:1.5px
    style IGNITION fill:#FFFBF0,stroke:#D6A700,stroke-width:1.5px,color:#4A3B00
    style STRATEGY fill:#F5F3FF,stroke:#7C3AED,stroke-width:1.5px,color:#3B0764
    style CRAFT fill:#EFF6FF,stroke:#325DCC,stroke-width:1.5px,color:#1E3A5F
    style PROOF fill:#F0FDF4,stroke:#059669,stroke-width:1.5px,color:#064E3B
    style SHIP fill:#F5F3FF,stroke:#5E35B1,stroke-width:1.5px,color:#311B92
    style BRAND fill:#F1F5F9,stroke:#64748B,stroke-width:1.5px,color:#334155
```

Round 2 winner. Solid arrows = forward motion. Dashed arrows = the revision loops everyone always wants to hide.

This was the moment the experiment stopped being a gimmick and started behaving like a method. The diagrams were no longer just illustrating the argument. They were auditing it.
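One rough way to audit a diagram for hidden loops is to count its solid and dotted links. The Python sketch below does that for simple flowchart source. It is a heuristic built on assumptions: the function name `count_links` is hypothetical, the regular expressions only cover the `-->`, `-- label -->`, `-.->`, and `-. label .->` forms used in the diagrams here, chained links on one line are counted once, and `&` is assumed to appear only as a fan-out between bare node IDs.

```python
import re

# Dotted links: "-.->" or "-. label .->"
DOTTED = re.compile(r"-\.(?:.*?\.)?->")
# Solid links: "-->" or "-- label -->"
SOLID = re.compile(r"--(?:.*?--)?>")


def count_links(source: str) -> dict[str, int]:
    """Count solid and dotted links in simple Mermaid flowchart source."""
    counts = {"solid": 0, "dotted": 0}
    for line in source.splitlines():
        # Check dotted first so a dotted arrow is never misread as solid.
        for kind, pattern in (("dotted", DOTTED), ("solid", SOLID)):
            match = pattern.search(line)
            if match:
                left, right = line[:match.start()], line[match.end():]
                # "A --> B & C" expands to one link per node on each side.
                counts[kind] += (left.count("&") + 1) * (right.count("&") + 1)
                break
    return counts


# Small invented sample, not one of the council diagrams.
SAMPLE = """flowchart TB
    A --> B
    B -- lands --> C
    C -. rewrite .-> A
    C --> D & E
"""

print(count_links(SAMPLE))  # {'solid': 4, 'dotted': 1}
```

A dotted count of zero does not prove a diagram is lying, since a loop can be drawn with a solid arrow. It is still a cheap first question to ask of any V1.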

Prompts in the Wild

Velocity, iteration, and vibe over premature prompt perfection

One of the points of this experiment is that the prompts were not highly polished.

They were not lab-grade.

They were not deeply pre-scripted.

They were not engineered like final-form production assets.

Most of them started as live dictation — quick, directional, natural-language prompts spoken in the moment while the idea was still moving.

That matters.

If every prompt had to be honed, polished, and weaponized before I could use it, the process would lose much of what made it valuable in the first place. The friction would rise. The mental cost would rise. The velocity would drop. And at some point I might as well go back to a traditional tool and manually draw the thing in Visio.

That is part of the thesis here: prompt precision has a cost.

Sometimes that cost is worth paying.

Sometimes it is not.

A perfect prompt is not always a high-ROY prompt.

In exploratory work — especially when using Mermaid to sketch, iterate, and surface structure — I care less about crafting the perfect prompt and more about maintaining forward motion.

These prompts were lightly edited for readability only. Grammar corrected, duplicate phrasing from live dictation removed, word overhead trimmed. The original shape and intent were preserved intentionally.

That is the point.

Prompting in practice is often fast, natural, and iterative.

A note on fairness

The brief was consistent. The conditions were not.

The core council members received materially similar direction and deliverable expectations. A few caveats matter:

Gemini ran on the free tier, the only council member with a capability ceiling imposed by tooling cost rather than the brief. Noted here. Not hidden.

Notion acted primarily as synthesizer, archivist, and court reporter rather than a voting peer.

Replit arrived later and was not part of the initial apples-to-apples round. The point of the exercise was not laboratory perfection; it was high-velocity comparative ideation with enough consistency to make the results useful.

ChatGPT Pro is a separate diagram (ChatGPT V2pro) produced using access to a Pro-tier reasoning model — a capability level no other council member had available during this session. It is included in the archive for transparency, not for direct comparison. Think of it as an exhibition entry: same brief, higher ceiling, different category.

Prompt 01 — The Seed

The idea started as a business-style ROI question, then bent toward pictures, words, and meaning. No deliverable yet. Just finding the governing idea.

View prompt

Thinking like a professor of business and investment, what is a realistic but attractive return on investment? There is an old adage that a picture is worth 1,000 words. If a diagram can be generated from words, what would be a good ROY — return on your words? If it takes more words to generate the picture than the picture returns in meaning, understanding, or compression, the investment may be poor. But if a picture created from words lands with more meaning than the words it took to create it, that seems valuable. What ratio of words to meaning, understanding, and structure makes this a good investment?

This prompt did not start with a deliverable. It started by finding the frame.
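The frame the prompt was reaching for is written inside the V2 diagram as "Clarity × Universality ÷ Words to generate". A minimal Python sketch of that ratio follows. The source gives no units, so the 0 to 10 scale for clarity and universality, the function name `roy`, and the example numbers are all assumptions made for illustration.

```python
def roy(clarity: float, universality: float, words_to_generate: int) -> float:
    """Return on Your Words: clarity times universality, divided by words spent.

    clarity and universality use an assumed 0-10 scale; the article does not define one.
    """
    if words_to_generate <= 0:
        raise ValueError("words_to_generate must be positive")
    return clarity * universality / words_to_generate


# Invented numbers: the same diagram quality reached with a short and a long prompt.
quick_prompt = roy(clarity=8, universality=5, words_to_generate=100)
polished_prompt = roy(clarity=8, universality=5, words_to_generate=400)

print(quick_prompt)     # 0.4
print(polished_prompt)  # 0.1
```

The absolute numbers mean nothing on their own. The comparison is the point: if the extra 300 words do not raise clarity or universality, they only shrink the return.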

Prompt 02 — The Meta Turn

The project becomes recursive: the post is about diagrams, and the diagram explains the making of the post. Abstract stops here. Self-demonstrating begins.

View prompt

I'm considering a LinkedIn post/article about the visual value of pictures and understanding — specifically the value of Mermaid diagrams, Mermaid tooling, and the ability to create Mermaid diagrams with most LLMs.

I want the post itself to include a Mermaid diagram showing the ideation process of creating the post. So the post would talk about the value of using words to generate pictures, and the picture would be the steps involved in writing the post itself.

What are the steps in creating a LinkedIn post about visual value, visual ROI, and the way a picture can sometimes be worth less — or much more — than a thousand words?

This is where the work stopped being abstract and started becoming self-demonstrating.

Prompt 03 — The Happy-Path Lie

The first diagram was too clean. This prompt forced honesty back into the structure — the shortest prompt in the sequence and arguably the most important.

View prompt

Ideation, thought, and writing are not perfectly linear. This initial Mermaid diagram shows a straight happy path from start to finish, but that is not honest.

Generating a diagram from words involves revision. Revision involves forks and loopbacks — a non-happy path. So it would be better if the diagram showed a non-ideal path rather than a straight one, with slight visual flair: restrained color variation, selective arrow styling, subtle hierarchy, and conservative emoji use. Enough to add clarity and pizzazz, but not enough to become noise.

This is the moment the diagram stops being decorative and starts being truthful.

Prompt 04 — Voice and Brand Lock-In

Once the structure was right, the output needed to sound like it belonged to the same builder and brand. Style calibration through example, not description.

View prompt

Create a tone-matched LinkedIn post that carries the same professional snark, swagger, and practical voice I tend to use. Strong hooks, good CTA structure, reinforcing my brand, driving interest toward OverKill Hill P³™, Glee-fully Personalizable Tools™, and AskJamie™.

Here is a sample of my recent LinkedIn style and article voice: [reference material pasted in full]

The work had to be structurally sound and recognizably mine.

Prompt 05 — The Council Becomes Formal

The process evolves from drafting into comparative judgment and synthesis.

View prompt

This is the final competition, an art contest where you judge your design against your peers.

First: identify the winner that best depicts the theme and best uses the current features available within Mermaid to showcase the art of the possible.

Second: learn from your self-evaluation and from your peers, then create a V2 that is more awe-inspiring for a LinkedIn audience.

This is where prompting stops being single-model generation and becomes council orchestration.

Prompt 06 — The Hybrid Request

Stop drafting. Start building. Synthesis into a best-of hybridization — post and final Mermaid diagram together.

View prompt

Using the elements from all provided versions, create a best-of hybridization post. Add new elements based on what you’ve learned from your peers and from me.

Then create a Mermaid diagram to accompany it — pulling every useful feature from Mermaid’s toolbox.

Constraints: post can’t exceed 3,000 characters, should contain links, I have a Mermaid account and can share a link to the final diagram, and I plan to use images of both the code and the rendered diagram to maximize character usage and minimize rendering issues.

The hybrid outputs became the material for the final published LinkedIn post. Claude’s structure. ChatGPT’s hook. Perplexity’s ROY framing. Copilot’s Mermaid configuration.

Prompt 07 — The Comment (Origin Story)

Raw autobiographical input. The model’s job was editorial, not creative: shape a live-dictated story into a LinkedIn comment without sanitizing it.

View prompt

Now I want to craft my first comment to my own post.

I began drawing pictures on a computer using AutoCAD 10 around 1991. Since then I’ve used numerous tools to put an idea into visual form — house plans, network diagrams, process flows, mind maps, org charts, UML, crow’s-foot database schemas. The list goes on.

About eight months ago, ChatGPT coughed up a random visual for a simple process I was describing. I asked what the hell it was. It replied: a Mermaid diagram.

So I asked Copilot to generate one too, then discovered that Microsoft Loop (Copilot Pages) could render Mermaid natively.

I can’t begin to count the hours I’ve stared at the Visio UI thinking: how in the hell am I going to draw this?

Now I can just throw spaghetti against the wall at high velocity and minimal drag.

The AutoCAD 10 origin story, the Visio framing, and the spaghetti metaphor all survived into the published comment.

Prompt 08 — The Diagram Competition

Final art contest. All five core council members judged their V1 peers and created V2 diagrams. This prompt produced both round winners.

View prompt

This is the final competition — an art contest where you judge your design against your peers: ChatGPT, Claude, Perplexity, Copilot, and Notion. The plan is to create separate comments on my LinkedIn post with links to the Mermaid-hosted results from each model.

First: identify the top performer — the diagram that best depicts the theme and makes the most of Mermaid’s current feature set. Showcase the art of the possible (pun intended).

Second: learning from your own self-evaluation and your peers’ work, create a V2 that raises the bar for what a Mermaid diagram can do for a LinkedIn audience.

Round 1 top performer: Copilot V1 — renderer-level YAML configuration. Round 2 top performer: Claude V2 — honest recursion, visible revision loops.

Eight prompts. One session. No template. No prompt framework. No pre-scripted structure.

Prompt 01 didn't mention LinkedIn or Mermaid.

Prompt 03 corrected a structural lie in a single paragraph.

Prompt 05 ran a five-model art competition with a pun in the brief.

Prompt 06 assembled the final synthesis from all five council outputs.

Prompt 08 ran the art contest that produced the two round winners.

None of them were polished before sending. All of them worked.

That's not an argument against precision. It's an argument for knowing when velocity is the right tool — and when the diagram you need is cheap enough to draft twice rather than plan once.

All 8 prompts in full, the council brief, and every Mermaid source file are in the public repository: github.com/OKHP3/first-diagram-is-a-liar

Launch Artifacts

Because this began as a LinkedIn experiment, the launch assets matter. The article is the canonical home. The post is the spear. The first comment is the afterburner.

LinkedIn Article — Return on Your Words (ROY)

The original published piece. The canonical home of the thesis, the council run, and both diagrams.

View article v0.1 copy

The First Diagram Is Usually a Liar

I’ve been drawing pictures on computers since the early 1990s. Back then, it was AutoCAD R10: black screens, command lines, coordinates instead of cursors. If you wanted to express an idea visually, you did not drag shapes around. You constructed it, line by line, with the same kind of concentration you would use writing a careful spec.

That early experience baked in a bias I still carry.

A diagram is not decoration. It is a claim about how reality is structured.

And the first claim is almost always wrong.

Over the decades I have used just about every diagramming flavor I could get my hands on: house plans, network diagrams, process flows, mind maps, org charts, UML, and crow’s-foot schemas.

Some tools were elegant. Many were painful. Nearly all of them charged the same tax: the cost of translating meaning into visuals.

That tax is why people default to text, even when text is a lousy medium for structure. That is how we ended up with the modern classics: the 47-bullet Slack message, the “quick summary” that reads like a novella, and the doc everyone bookmarks and nobody finishes.

We did not lack expertise. We lacked compression.

This is v0.1, the first proper build. The article is going to evolve in public. Later revisions will add the winning slide deck, direct links to the live Mermaid renders, public poll rounds where readers can judge the diagrams for themselves, and some behind-the-scenes notes on how the Council of AIs argued its way toward the stronger work. For now, this is the compressed cut.

People do not fail to read because they are lazy. They fail to read because their capacity to absorb meaning is finite and the workday is an attention shredder. Text is excellent for nuance and precision, but it is inefficient at showing flow. It hides forks and dead ends. It forces the reader to reconstruct a system in their head, one sentence at a time, without ever seeing the shape of it.

That reconstruction is the expensive part. You can watch it happen in real time: somebody nods through the explanation, then asks a question that proves they assembled a completely different machine in their mind. Nobody is stupid. The medium simply did not carry the structure.

This is where diagrams earn their keep, when they are honest. Not pretty. Not polished. Honest.

A lot of diagrams are not honest. They are optimism with arrows. They erase the messy edges, exceptions, feedback loops, and human bottlenecks because the author wants to look decisive, or because the tool quietly pressures everything toward tidiness.

That is why the first diagram is usually a liar. Not maliciously. Prematurely. It shows what we hope is true before we have done enough work to see what is actually there.

[Figure: Copilot V1 flowchart, Council of AIs Round 1 winner. Shows the ideation process for a LinkedIn post about visual ROI, including decision gates, revision loops, and non-linear thinking paths.]

Copilot V1, generated using GPT 5.4 Thinking. Top-scoring first-round submission from the Council of AIs evaluation. It earns its place here because it demonstrates the argument instead of merely describing it: a diagram that shows structural thinking without hiding the decision points, the branches, or the places where the process could go wrong.

The diagram above was generated from words. That is the point. The visual was purchased with language and judged by the understanding it produced.

ROY: Return on Your Words

I have started thinking about this through a metric I call ROY, Return on Your Words. Not how many words you wrote. Not how polished they sounded. ROY is the amount of understanding produced per unit of explanation invested.

If you write 800 words and the reader still needs a meeting to understand the system, the ROY is poor. If you write 80 words that generate a picture which makes the system obvious, the ROY can be excellent, even if the picture is not beautiful. The point is not elegance. The point is whether the words bought clarity.

That is the question I keep coming back to: did the visual remove enough confusion to justify the effort it took to make it?

For most of my career, the answer was often no, simply because the production cost was too high. Visio and its cousins made diagrams possible, but they also created friction. You were not just thinking about the system. You were wrestling the canvas: alignment, spacing, connectors, swim lanes, and that familiar moment when you are ten minutes deep into nudging boxes and have forgotten what you were trying to explain in the first place.

The tool did not just cost time. It pulled you out of the mental mode required to do the work. So, diagrams became presentation artifacts, something you created at the end after the thinking was supposedly finished.

But the thinking was never finished. That is the point.

When Diagrams Stopped Being “Drawing”

About eight months ago, ChatGPT casually generated a visual for a simple process I was describing. My first reaction was not awe. It was suspicion. It looked too tidy. Then I asked what it was, and it answered: a Mermaid diagram.

That was the pivot.

Mermaid is a text-based diagramming language. You describe structure in plain language, and it renders a diagram. No canvas anxiety. No toolbar archaeology. You are not arranging shapes so much as declaring relationships.

Then I discovered Microsoft Loop could render Mermaid natively, and that changed the economics in a way I did not expect. Suddenly the cost curve collapsed. Diagramming stopped feeling like a separate task and started feeling like a mode of thought, structural thinking expressed in text.

When the cost of making a diagram collapses, the diagram can afford to be wrong. A bad first draft stops being a failure and becomes part of the reasoning process. I could draft the lie quickly, spot the lie quickly, and iterate toward something truer. That changes the game.

The Council of AIs, and Why It Works

That is also when my workflow expanded. Instead of asking one model for one answer, I started asking several: ChatGPT, Claude, Copilot, Perplexity, Gemini, and Notion. Same prompt. Same goal. Independent outputs. Then I pulled them back together and compared the differences.

I call it the Council of AIs, and it is less about outsourcing intelligence and more about manufacturing perspective. The value is not that one model is correct. The value is that disagreement reveals structure. One model assumes a clean pipeline. Another introduces a feedback loop. Another surfaces an ambiguous handoff. Another exposes a missing actor.

When I compare those outputs side by side, I get less performance and more perspective. And at that point, the diagram becomes the scoreboard. Does the idea actually have shape? Or is it still fog with confident nouns?

That comparison process becomes more visible in the next revisions: the first-round and second-round contenders, the Mermaid renders, and the public poll rounds so readers can judge for themselves instead of taking my word for it.

[Figure: Claude V2 flowchart, Council of AIs Round 2 top scorer. Five swim lanes showing Ignition, Strategy, Craft, Proof, and Ship phases with dashed revision loops and labeled loopback arrows.]

Claude V2, generated using Sonnet 4.6 Extended Thinking. Top-scoring second-round submission after the council saw the first-round work and was pushed to improve. It earns its place here because the form matches the argument: revision loops stay visible, phases stay distinct, and the diagram stops pretending ideation is linear.

Here’s the brief I ran across all six models:

Same brief to 6 models. Do not read each other.

Deliver:

- a 3-part thesis
- a Mermaid diagram (first draft)
- 3 failure modes (where the diagram lies)

Then: compare, adjudicate, synthesize.

The value is not in the words themselves. The value is in the meaning extracted from them. If 75 words of structured prompting generate a diagram that saves five minutes of explanation, prevents one misunderstanding, and helps a team align faster, that picture may be worth more than the words that created it. Not because the words disappeared. Because the words were invested.

That is the work I keep circling back to at OverKill Hill P3, Precision, Protocol, Promptcraft. Words into structure, structure into systems, systems into leverage. Sometimes the right output is a paragraph. Sometimes it is a diagram. Sometimes it is both. But the output has to earn its space.

And yes, this article is part of the experiment too. It is going to be revised in public, diagrammed, judged, and probably accused of overthinking itself before it is done. A few things are landing here over the next few weeks: the full ETCH-AI-SKETCH slide deck, the Round 1 poll where readers vote on the diagrams, the Round 2 redesigns, and a section where each model gets interviewed about its own work in its own voice, including whether it thinks it should have won.

This article is the protoform. The interesting parts are still being forged.

A picture can absolutely be worth more than the words that prompted it into existence, but only if those words buy clarity, compression, and shared understanding. If the visual can’t do that, it is still just wallpaper with a superiority complex.

LinkedIn Post — v0.1

The feed-native cut. Short enough to move, sharp enough to stop the scroll.

View launch post copy